Agentic coding makes strict static analysis non-negotiable.

I love static analysis. It catches bugs you didn't know could've been bugs, and it enforces conventions that would otherwise rely on human discipline and code review.

For PHP, PHPStan has been the standard for years. It's incredibly good, like a combination of TypeScript and ESLint for PHP. I put together a living reference page for the strictest PHPStan config I could come up with, and this article explains the thinking behind it.

Static analysis as an AI guardrail

Before agentic coding, strict static analysis was a nice-to-have for code quality. You'd configure PHPStan and catch a class of bugs that tests alone wouldn't find. It was a good practice.

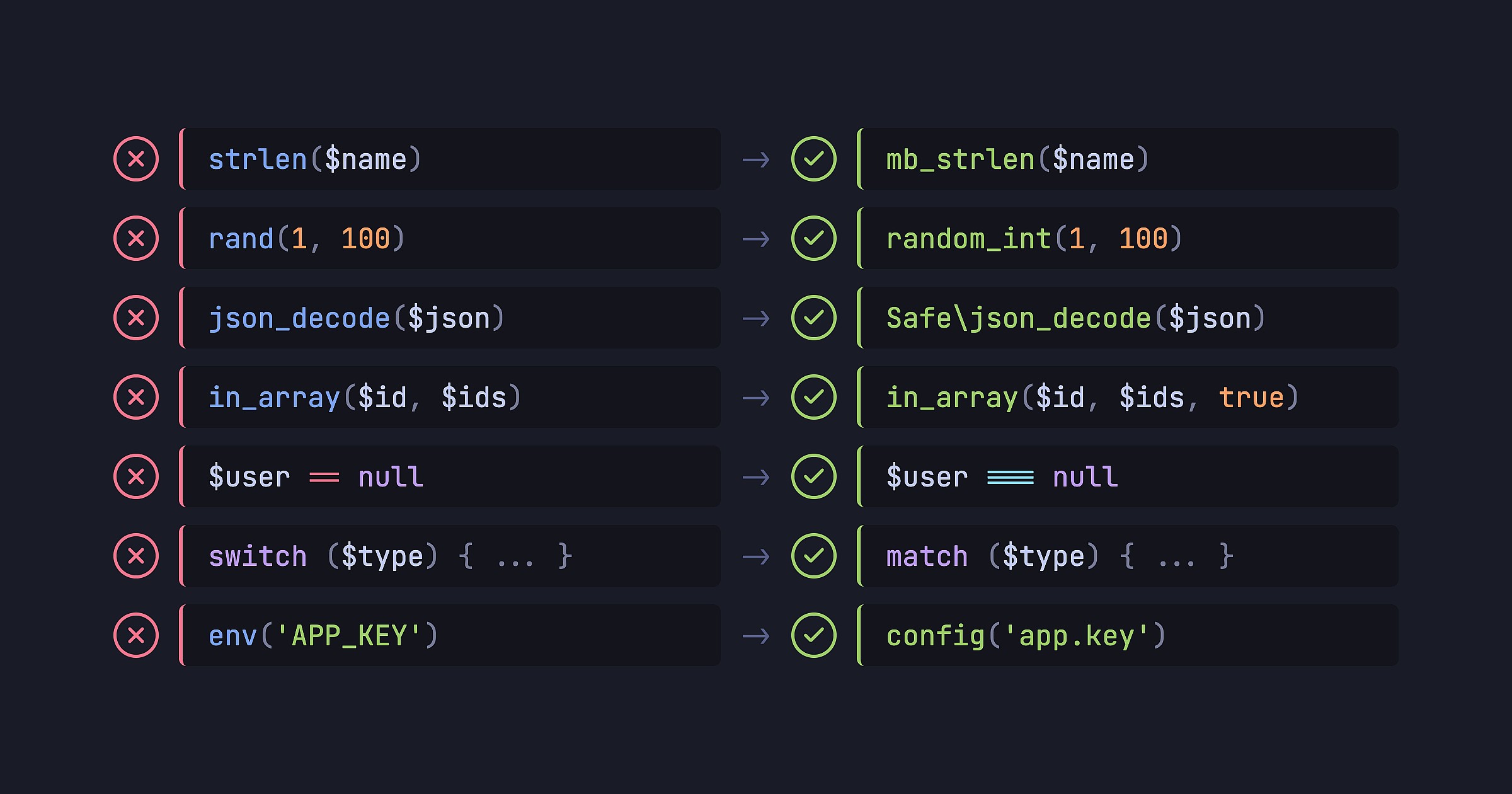

But now, with AI generating the bulk of your code, strict static analysis should be treated as the primary mechanism for enforcing standards. You can tell an LLM "use strict comparisons" in a system prompt, and it'll do it... but not ALL of the time. A static analysis rule flags every violation, on every run.
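To make the strict-comparison point concrete, here is a minimal sketch of the kind of bug a loose comparison lets through. The function names are hypothetical, made up for illustration. The assumption is that a rule disallowing `==` and `!=` is enabled (phpstan-strict-rules ships one); which packages the config actually uses is covered in the reference.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical example: comparing two codes with a loose comparison.
 * PHP compares numeric strings as numbers, so '1e1' (scientific
 * notation for 10) is considered equal to '10'.
 * A rule that disallows loose comparison would report the `==` here.
 */
function isSameCodeLoose(string $a, string $b): bool
{
    return $a == $b;
}

/**
 * The strict version compares the strings byte for byte,
 * so only identical codes match.
 */
function isSameCodeStrict(string $a, string $b): bool
{
    return $a === $b;
}

var_dump(isSameCodeLoose('10', '1e1'));  // bool(true)
var_dump(isSameCodeStrict('10', '1e1')); // bool(false)
```

A prompt instruction makes the first version unlikely. A failing analysis run makes it impossible to merge.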

I sincerely believe deterministic code quality tools are non-negotiable in this day and age, and making good use of them should be part of our "new" job expectations. AI doesn't write code the way a human does, because it doesn't learn from what you tell it. Someone who's been on my team for 5 years knows our coding standards, the patterns we use, the mistakes we make, and the way we structure code. An LLM is constantly starting from scratch, and even when it isn't, it will sometimes just "forget" things you explicitly told it, then say "You're absolutely right!" when you point that out. You need tooling to catch those kinds of mistakes.

Cognitive complexity limits are a good example. Without them, LLMs will happily keep making functions and objects longer and longer as they work through issues. God objects out the wazoo. AI doesn't care; it's just trying to solve whatever task you gave it. But once you set a complexity limit, the LLM is forced to decompose: it breaks the logic into smaller units and comes up with better-organized ways of doing things to stay under the limit. I've seen the refactors those limits led to, and they're really nice.
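As a rough illustration of that kind of forced decomposition, here is a sketch with made-up shipping rules. Every function name and threshold is hypothetical, and the exact score a given complexity rule assigns will vary. The point is only the shape: the first function nests branches four levels deep, while the second version spreads the same logic across small functions that each stay shallow.

```php
<?php

declare(strict_types=1);

/** Before: every new case adds another level of nesting. */
function shippingCostNested(float $subtotal, string $country, bool $isMember): float
{
    if ($subtotal > 0.0) {
        if ($country === 'US') {
            if ($isMember) {
                return 0.0;
            } else {
                if ($subtotal >= 50.0) {
                    return 0.0;
                } else {
                    return 5.0;
                }
            }
        } else {
            if ($isMember) {
                return 10.0;
            } else {
                return 15.0;
            }
        }
    }

    return 0.0;
}

/** After: one named decision per function. */
function qualifiesForFreeDomesticShipping(float $subtotal, bool $isMember): bool
{
    return $isMember || $subtotal >= 50.0;
}

function domesticShippingCost(float $subtotal, bool $isMember): float
{
    return qualifiesForFreeDomesticShipping($subtotal, $isMember) ? 0.0 : 5.0;
}

function internationalShippingCost(bool $isMember): float
{
    return $isMember ? 10.0 : 15.0;
}

function shippingCost(float $subtotal, string $country, bool $isMember): float
{
    // Guard clause replaces the outermost if-block.
    if ($subtotal <= 0.0) {
        return 0.0;
    }

    return $country === 'US'
        ? domesticShippingCost($subtotal, $isMember)
        : internationalShippingCost($isMember);
}

var_dump(shippingCost(20.0, 'US', false)); // float(5)
```

Both versions return the same results for the same inputs. The difference is that each piece of the second one can be read, named, and tested on its own.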

A human would grumble about having to refactor everything they just wrote, but an LLM doesn't care. You can make it jump through whatever hoops you can imagine, and you will end up with much better code.

So the answer is: come up with as many hoops as you possibly can! If you need better-quality code, encode your standards and then force AI to meet them.

The config

I put together a PHPStan configuration for this reality: level 10 with extra strictness packages on top, tuned to catch as many things as possible. The config is opinionated, but I lay out the reasoning behind every choice in the reference.

It covers:

- Every rules package, what it catches, and why it matters for AI-generated code specifically.

- The full annotated phpstan.neon with the reasoning behind each section.

- Disabled rules with the pragmatic reasoning (ergebnis ships rules like "no extends" that conflict with Laravel's architecture).

- Ignored errors, each with the specific reason it's a false positive or framework pattern.

If you're writing Laravel applications with AI assistance (and at this point, most of us are), strict static analysis is the single highest-leverage guardrail you can add. The config is ready to drop into any Laravel project.

Note: When you add this config to an existing project, it can surface tens of thousands of errors to fix. It's a huge lift that'll scare even the most disciplined teams. If only you had a code generation tool to automatically fix those errors... 😉

The full config with every rule explained is at pocketarc.com/phpstan. It's a living page, and I'll keep updating it as the configuration evolves. I'd love to hear what you think. Reach out via email or X/Twitter.